What are bad bots?

As the name suggests, bots are automated programs that imitate human activity online. Their number has grown rapidly over the last few years, which has raised alarm. According to some sources, bots account for about 60% of internet traffic. Bots fall into two categories: good bots and bad bots. Among the good bots, you might know Apple’s Siri and Microsoft’s Cortana. These bots often operate with the help of AI. They are primarily programmed to assist human users and to optimize the working of online services.

However, the bad bots are the ones causing trouble across the internet. Bad bots are generally defined as bots that crawl websites to obtain information without consent. Their operators use that information to gain an edge over competitors or sell it for profit. Black-hat hackers are known to program bots for outright illegal activities such as theft and fraud. Typical activities include collecting and harvesting data, competitive data mining, spam, and fraud, to name a few. What separates bad bots from good bots is their function. Bad bots are also available for hire, and several businesses actually hire them.

What are bad bots/fake bots capable of?

Bad bots can choke servers and, in certain cases, turn computers into zombie machines. They can crawl through hundreds of websites, harvest data, and steal content. They tend to look for outdated plugins and software and exploit their vulnerabilities to gain unauthorized access. Sites with unique content, login pages, web forms, and payment portals are the ones bots most commonly target. Scraper bots are common, and they can easily extract important information from login pages and web forms. Web scraping is just one way bad bots harm your website. Other methods include skewing, denial of service, credential cracking, credential stuffing, ad fraud, vulnerability scanning, footprinting, fingerprinting, and spamming.

Bots responsible for DDoS attacks:

Denial-of-service attacks are quite common, and there have been a number of large-scale ones. One of the largest took place in September 2016 and revealed a new type of botnet named Mirai. Later, GitHub faced what was then the largest DDoS attack ever recorded. Such DDoS attacks rely on computers that have been taken over by bots. The bots infect the systems of unsuspecting users and slow them down. Once the systems are infected, the hackers can control them at will. These infected computers are then used to mount a DDoS attack on a website and bring it down. During the attack on GitHub, peak traffic reached about 1.35 terabits per second. This shows the scale that bot-driven attacks can reach.

Footprinting and scraping bots:

Credential cracking is an easy way to gain access to personal accounts. Bots can obtain usernames by scraping login pages and web forms. Once they have a username, they only need to brute-force the password. This also leads to credential stuffing: when bots have usernames and passwords, they enter these credentials into login pages. Bots can do this far faster than humans and can attempt the logins across a number of pages.

Companies hire pen testers to find vulnerabilities in their websites so that they can plug them, while malicious hackers use the same weaknesses to gain access to websites and cause damage. To find these vulnerabilities, hackers use bots designed to scan websites. Footprinting is a similar process, except that the bots work on applications: they exercise an application and put it in situations it would not face with a normal user.

Spamming bots:

Spamming is one of the most common ways bots harm websites and their users. Bots may be filling up your website with dubious content and code. They also target forms and databases, submitting malicious data to consume resources and pollute the site. These bots are easy to obtain: one just needs to pay a few bucks to get them from a hacker. If a website is popular, the bots will create and insert backlinks within the site to divert unsuspecting users to sinister or malware-infected sites.

Origins of bad bots:

Bots have overrun the internet, and their number keeps increasing. Good bots originate when companies use them to gather user data and make their services faster and more efficient. Facebook and Google use bots to scrape websites and user data for important information. Bad bots, however, originate when hackers program bots to obtain information by unlawful means. There are various kinds of bad bots, including spam bots, click bots, and spy bots, to name a few. Any bot can be programmed to serve the needs of the attacker or the corporation funding it.

Looking at the country-wise distribution of where bad bots originate, the United States comes out on top: it produces about 50% of the bots, while China leads in bot traffic. However, the true origin of bots is difficult to trace, since they remain hidden most of the time. One can also buy malicious bots from markets that sell them for a fee.

How to detect bad bots:

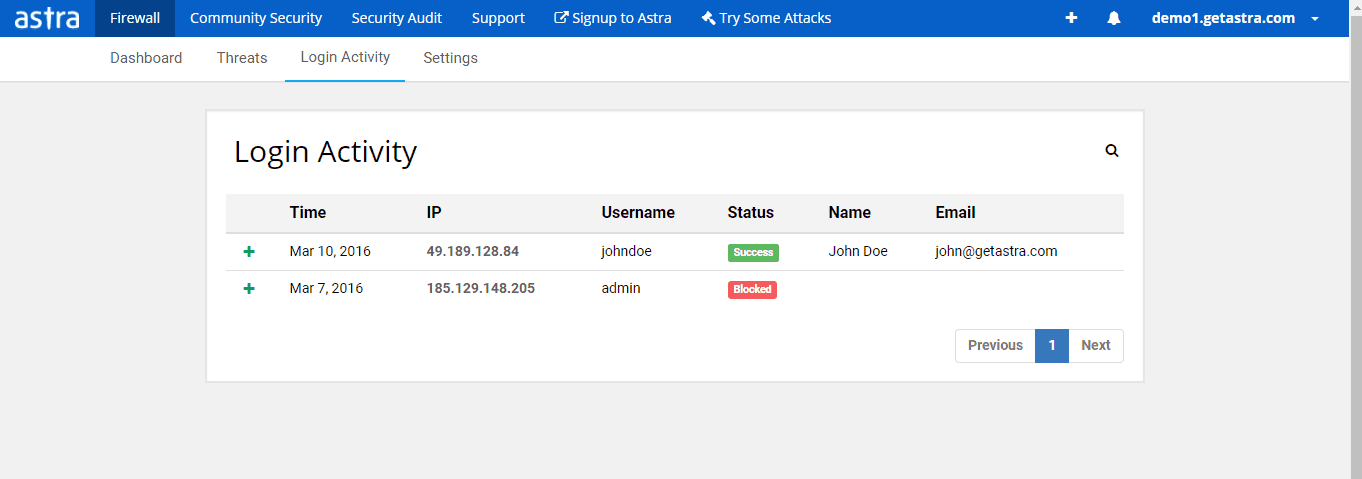

There are different ways to spot different bots, since they have different attributes and functions. You can identify a web scraping bot by analyzing the behavior of your competitors or by finding links to your content in unfamiliar locations. There was a report that Jeff Bezos used bots to track the prices of products on Diapers.com. One can use such cases as guides to identify bots. To identify skewing or credential cracking bots, you need to analyze your traffic and login attempts. If you see an unnatural number of login attempts from a particular location, then it is probably a bot trying to gain access.
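The login-attempt check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `flag_suspicious_ips`, the tuple layout of the log entries, and the thresholds (more than 5 failures within 1 minute) are all hypothetical, and a real store would tune them against its own traffic.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def flag_suspicious_ips(attempts, max_attempts=5, window=timedelta(minutes=1)):
    """Return the set of IPs with more than max_attempts failed logins
    inside any sliding time window.

    attempts: iterable of (timestamp, ip, succeeded) tuples, sorted by
    timestamp. This layout is an assumption made for the sketch.
    """
    recent = defaultdict(deque)  # ip -> timestamps of recent failures
    flagged = set()
    for ts, ip, succeeded in attempts:
        if succeeded:
            continue
        failures = recent[ip]
        failures.append(ts)
        # Drop failures that have fallen out of the window.
        while failures and ts - failures[0] > window:
            failures.popleft()
        if len(failures) > max_attempts:
            flagged.add(ip)
    return flagged


# Example: one address fails ten times in ten seconds, another fails once.
start = datetime(2024, 1, 1, 12, 0, 0)
sample = [(start + timedelta(seconds=i), "203.0.113.7", False) for i in range(10)]
sample.append((start + timedelta(seconds=30), "198.51.100.4", False))
sample.sort(key=lambda entry: entry[0])
print(flag_suspicious_ips(sample))  # {'203.0.113.7'}
```

Counting only failed attempts keeps ordinary users who log in successfully out of the result, although a bot that spreads its attempts across many addresses would need a different signal.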

Bots engaged in ad fraud constantly try to load pages with fake ads. They redirect users to fake websites to earn from search keywords. If you see an IP address systematically accessing every page on your website, then it is probably a bot scanning for vulnerabilities. To identify spamming bots, take a look at your comments section. Spam generally appears as comments or links that redirect visitors. If visitors to your site are complaining about spam emails, then a spam bot is probably active on your website.
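As a rough sketch of the "systematic access" check, the Python snippet below measures what share of a site's pages each IP address has requested. The function name `find_systematic_crawlers`, the `(ip, path)` log format, and the 80% threshold are assumptions chosen for illustration, not values from any particular tool.

```python
from collections import defaultdict


def find_systematic_crawlers(requests, site_paths, coverage_threshold=0.8):
    """Return {ip: coverage} for IPs that requested at least
    coverage_threshold of the known pages.

    requests: iterable of (ip, path) pairs taken from an access log.
    site_paths: iterable of every page path on the site.
    """
    known = set(site_paths)
    if not known:
        return {}
    seen = defaultdict(set)  # ip -> distinct known paths it requested
    for ip, path in requests:
        if path in known:
            seen[ip].add(path)
    result = {}
    for ip, paths in seen.items():
        coverage = len(paths) / len(known)
        if coverage >= coverage_threshold:
            result[ip] = coverage
    return result


# Example: one address walks every page, another visits two of five.
pages = ["/", "/about", "/login", "/cart", "/checkout"]
log = [("203.0.113.7", p) for p in pages]
log += [("198.51.100.4", "/"), ("198.51.100.4", "/cart")]
print(find_systematic_crawlers(log, pages))  # {'203.0.113.7': 1.0}
```

Good bots such as search engine crawlers also visit every page, so a hit from this check is a reason to look closer rather than proof of an attack.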

How to protect your Magento, OpenCart & PrestaShop stores against bad bots?

Identifying the bots is the first step in protection. For bots that you can tie to a specific IP address, you can block that address.
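A minimal sketch of IP blocking at the application level is shown below, using Python's standard `ipaddress` module. The `IPBlocklist` class is hypothetical and written only for this example; in practice blocking is usually done in the web server, firewall, or a protection service rather than in application code.

```python
import ipaddress


class IPBlocklist:
    """Hypothetical in-memory blocklist of single IPs and CIDR ranges."""

    def __init__(self):
        self._networks = []

    def block(self, cidr_or_ip):
        # A bare address such as "203.0.113.7" becomes a one-address network.
        self._networks.append(ipaddress.ip_network(cidr_or_ip, strict=False))

    def is_blocked(self, ip):
        addr = ipaddress.ip_address(ip)
        return any(addr in net for net in self._networks)


# Example: block one address and one /24 range.
blocklist = IPBlocklist()
blocklist.block("203.0.113.7")
blocklist.block("198.51.100.0/24")
print(blocklist.is_blocked("198.51.100.42"))  # True
print(blocklist.is_blocked("192.0.2.1"))      # False
```

Blocking whole ranges is blunt and can lock out real visitors who share that range, so it is worth reviewing the list regularly.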

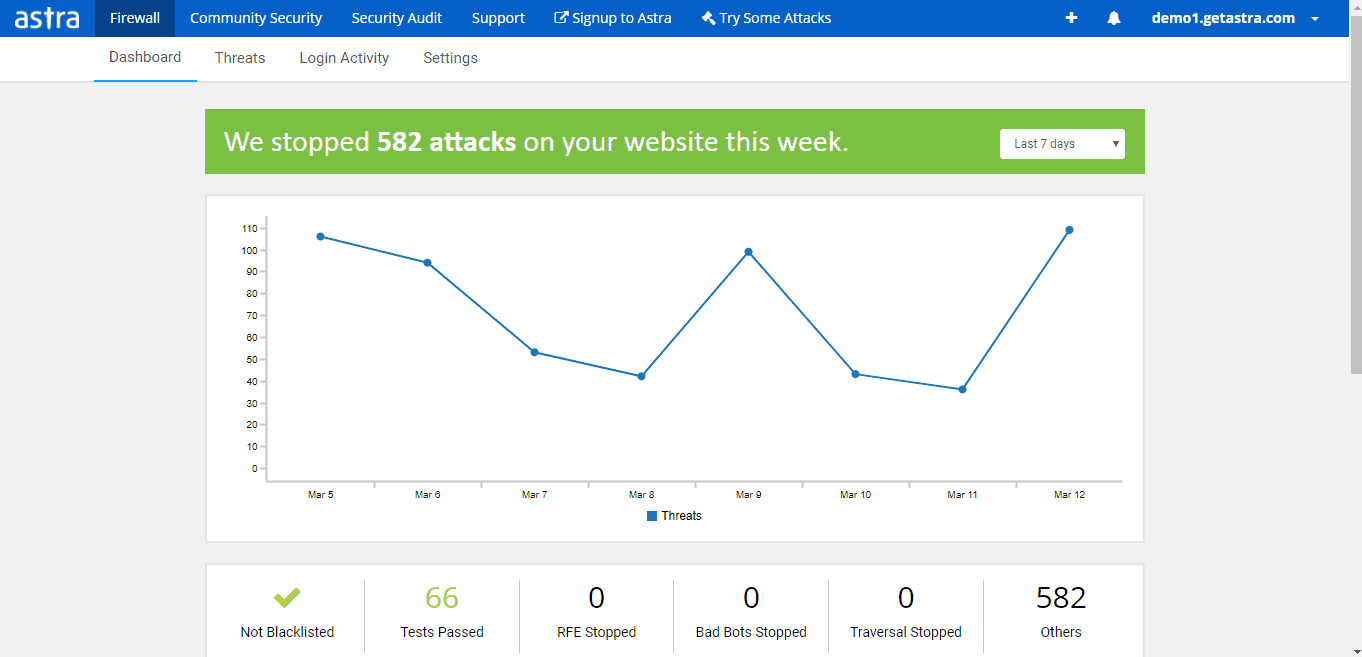

Using CAPTCHA is another method to stop bots, though if you use too many CAPTCHAs there is a chance of losing visitors. Learning the habits of bots will help you identify them and take the appropriate steps. Web protection services like Astra offer strong protection against bad bots. Astra specializes in identifying and eliminating bots. Features such as automatic blocking of hackers, DDoS protection, blocking bots from stealing content, and protection against bad bots help keep websites safe. You can also go through the firewall data sheet from Astra to safeguard your website.